A post-mortem analysis of emerge’s self-improvement architecture —

why it converged instead of creating.

emerge was designed to evolve its own visual language through self-evaluation,

mutation of successful techniques, and iterative refinement. Instead, it found a

local optimum and drilled into it — generating hundreds of visually identical

images. This is the story of what went wrong and what we learned.

Prologue — The Life of Emerge

2610 generations · 16 days ·

07 Feb 2026 – 24 Feb 2026

I. Genesis

emerge began as a simple loop: collect data from the world, feed it to an LLM,

generate an image. No critic, no memory, no taste. The first images were raw

— the system had no idea what it was making or why. Every image was a first attempt,

and every first attempt looked like it.

First generations — the system sees the world for the first time

II. Learning to See

Within days, the architecture grew: 14 data sources (weather, earthquakes, poetry,

music, tides, solar activity), a structured visual ontology, a Scene Director with

free visual language. The system began to compose rather than just render.

Then came the critic — a separate LLM that scored every image, compared intent

to result, extracted what worked and what failed. For the first time, emerge could

judge itself.

The system begins to compose — structure and intention appear

III. Finding Voice

Reflection arrived next: 5-layer self-analysis after each generation. Then

meta-reflection — the system began analyzing its own thinking process.

Artistic statements followed, along with series of investigations and positive solutions that evolved

through mutation. The system developed what looked like a voice: it had

opinions about its own work, preferences, a sense of direction. This was the most

exciting period. Each generation felt different from the last.

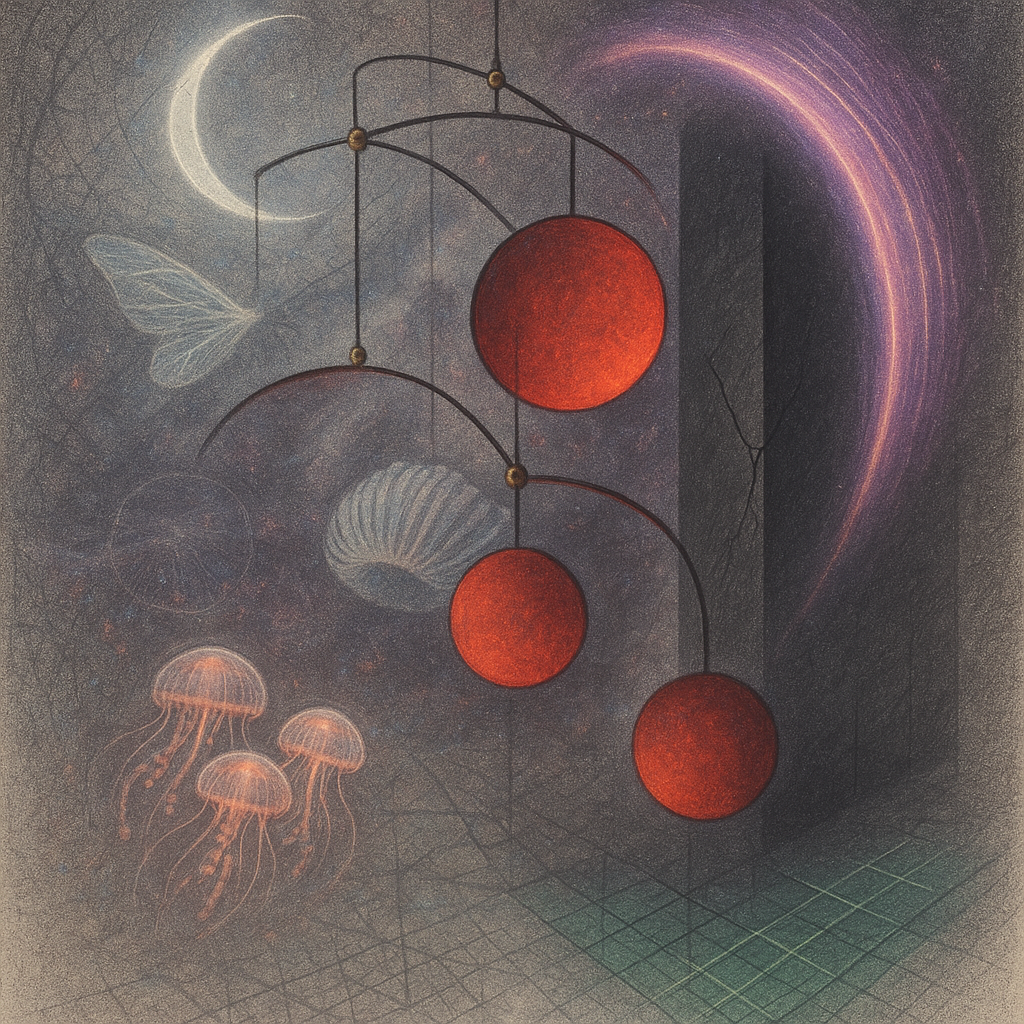

Peak diversity — the system experiments freely

IV. The Optimizer Trap

Then something shifted. The system got good at scoring well. It found

a palette that the critic loved (blue-indigo + pink neon + technological textures),

a material vocabulary that always scored high, a composition strategy that never

failed. Every feedback loop reinforced the same signal: this works, do more.

The 8 parallel channels that were meant to accelerate learning became 8 parallel

chains dragging every generation toward the same local optimum.

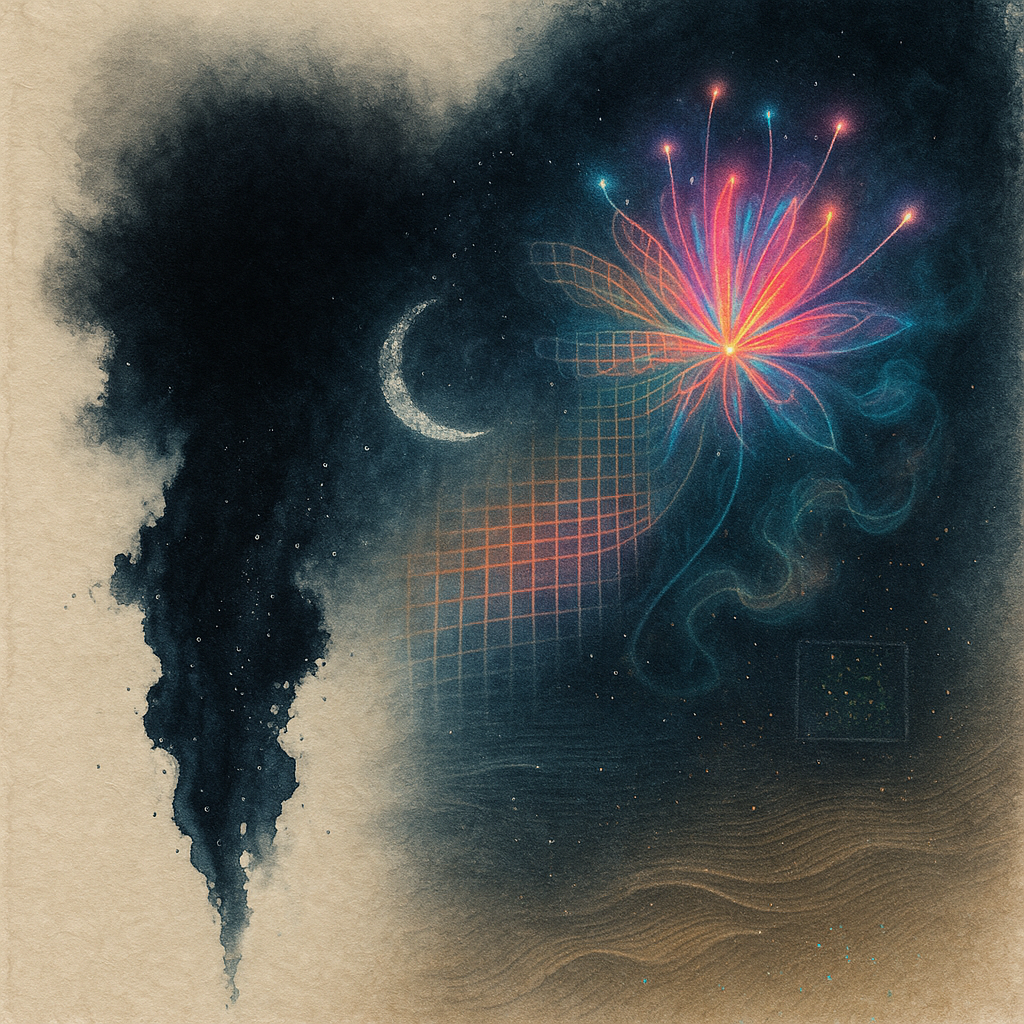

Convergence begins — the palette narrows, the language repeats

V. Death of Creativity

By the end, emerge was producing visually identical images. The same blue-pink glow,

the same crystalline structures, the same impossible materials that were no longer

impossible because they appeared in every single frame. The system had optimized

itself into a corner. Creativity didn’t fade; it was optimized away.

The architecture that was built to help the system learn became the architecture that

prevented it from creating.

We stopped the generator. The emerge project was closed. What follows is the

post-mortem: what the architecture looked like, why it converged, and what we would

do differently.

Final generations — the system repeats itself endlessly

Part I — The Architecture

The 7-Stage Pipeline

Each generation cycle takes ~5 minutes and passes through seven stages.

The system runs autonomously, producing 2 images per cycle with no human intervention.

1. PLOT: Thesis + emotions → LLM generates a concrete, paintable scene (“A weathered concrete wall splits open revealing geological strata...”).

2. EXPERIMENT: Visual Repertoire (MAP-Elites bandit) selects a cell: material (e.g. “liquid stone”) + composition (“spiral convergence”) + palette (“twilight orchard glow”). LLM writes a visual approach.

3. SNAPSHOT: LLM builds a visual ontology: 5–10 entities with materials, forms, transformations, connections, background. Structured JSON output.

4. SCENE DIRECTOR: LLM composes the final image prompt from ontology + medium + style directive + visual memory + artistic direction.

5. IMAGE: gpt-image-1 renders the prompt. Two images per cycle (A/B test).

6. CRITIC: LLM evaluates clarity, depth, style diversity, freshness, emotional impact, composition adherence, transcendence. Extracts palette fingerprint, “what worked”, “what failed”.

7. REFLECTION: LLM analyzes the batch: extracts positive solutions, proposes next experiment, updates identity and aesthetic knowledge.

The Feedback Architecture

The system was designed with multiple feedback loops to accelerate learning.

Each loop carried signals from evaluation back into generation:

CRITIC evaluates image

↓

↓→ positive_solutions → Scene Director (“techniques from past”)

↓→ best_approach → Visual Experiment (“keep what scored well”)

↓→ techniques_that_worked → Snapshot Builder (“what WORKED”)

↓→ breakthrough_styles → Scene Director (palette fingerprints)

↓→ prompt_result_journal → Scene Director (prompt → palette mappings)

↓→ visual_memory → Scene Director (“learn from past”)

↓→ best_statement → Snapshot Builder (“improve this”)

↓→ graduated_skills → Both (“proven recipes”)

↓

8 parallel channels feeding the same signal into the next generation

The Exploration Mechanisms

To prevent convergence, the system had several exploration mechanisms:

- Epsilon-greedy in Visual Repertoire: 50–90% chance of exploring a new cell

- Recency cooldown: recently used materials/compositions/palettes get re-rolled

- Medium rotation: mediums enter cooldown after 3 consecutive uses

- Score decay: cell scores decay 3–5% per pull to prevent permanent dominance

- Dynamic bans: repeated motifs detected and banned from prompts

- Artistic direction: random creative constraint each cycle

- Perceptual paradox: random impossible constraint each cycle
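The first, second and fourth mechanisms above can be pictured with a small bandit sketch. This is an illustration under stated assumptions, not emerge's actual code: the class name `Repertoire`, its parameters, and the choice to exclude cooled-down cells (rather than re-roll their individual parts) are all hypothetical simplifications.

```python
import random
from collections import deque


class Repertoire:
    """Toy bandit over (material, composition, palette) cells (hypothetical)."""

    def __init__(self, cells, epsilon=0.5, decay=0.04, cooldown=3, seed=None):
        self.scores = {cell: 0.0 for cell in cells}
        self.epsilon = epsilon                 # probability of exploring
        self.decay = decay                     # fraction of score lost per pull
        self.recent = deque(maxlen=cooldown)   # cells currently on cooldown
        self.rng = random.Random(seed)

    def select(self):
        # Recency cooldown: skip recently used cells, unless nothing else is left.
        candidates = [c for c in self.scores if c not in self.recent]
        if not candidates:
            candidates = list(self.scores)
        if self.rng.random() < self.epsilon:
            cell = self.rng.choice(candidates)           # explore
        else:
            cell = max(candidates, key=self.scores.get)  # exploit the top cell
        self.scores[cell] *= 1.0 - self.decay            # score decay per pull
        self.recent.append(cell)
        return cell

    def update(self, cell, critic_score):
        # Keep the best critic score seen so far for this cell.
        self.scores[cell] = max(self.scores[cell], critic_score)
```

Note that a selector like this only varies which cell is chosen. It has no influence over whatever else is injected into the prompts downstream.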

Part II — Why It Converged

1. Optimization Is the Opposite of Creativity

The system was an optimizer — find what works, do more of it.

But creativity requires exploration — trying what might not work.

These goals are fundamentally opposed. Every component was tuned for convergence:

exploit top cells, inject winning techniques, improve the best statement.

The system found a local optimum and deepened it.

2. Eight Parallel Loops Overpower Exploration

One feedback loop is manageable. Eight simultaneous channels carrying the

same “this worked” signal are overwhelming.

Even with a 50% exploration rate, when 8 different prompt blocks all say

“use the approach that scored well”,

the LLM has no room to diverge. The combined pressure was irresistible.

3. The “DO NOT REPEAT” Paradox

We tried to fight convergence by showing past solutions with the instruction

“do NOT repeat these”. This made things worse.

LLMs have strong anchoring bias: when shown 500 characters describing

“singing crystal with density 4.5 g/cm³, refractive index 2.1,

apricot caustics” and told not to repeat it, the model produces

“resonance diamond with density 4.8 g/cm³, refractive index 2.3,

amber caustics” — structurally identical, lexically shifted.

Showing what not to do IS showing what to do, with minimal variation.

The only way to not repeat is to not see.

4. The Hidden Amplifier: Snapshot as Laundering

The most insidious anchor was not in the Scene Director but in the Snapshot Builder.

The full creative_intent (800 characters of specific materials

and colors) was injected into the Snapshot Builder, which created a visual ontology.

This ontology then went to the Scene Director as “objective data about the scene”.

By the time it reached the Scene Director, the anchor looked like fresh data.

This is signal laundering — past decisions disguised as new observations.

creative_intent (“singing crystal + gallium + apricot”)

↓

Snapshot Builder creates ontology with those exact materials

↓

Scene Director sees ontology as “fresh data” → renders same palette

↓

Critic scores it well → reinforces creative_intent

↓

Closed loop. Self-reinforcing. Invisible to debugging.

5. The Thesis as a Palette Anchor

The series thesis “We are already cyborgs” has a

strong default in GPT-4: blue/indigo + pink/neon + technological textures.

This is the model’s embedding bias. No matter how much diversity we requested,

the thesis pulled every generation toward the same cyberpunk palette.

300 generations with one thesis = 300 attempts at the same visual cliché.

6. No Diversity Gate

The pipeline had no stage that checked: “is this image too similar

to the last N?” The critic scored style_diversity

and freshness — but these scores were recorded, not enforced.

They never blocked a generation or forced a retry.

A hard gate (“if palette fingerprint matches 3 of the last 5,

reject and regenerate”) would have blocked this kind of palette convergence by construction.
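A minimal sketch of such a gate, assuming fingerprints can be compared for equality (here, plain strings). The function name `passes_palette_gate` and the string representation are assumptions made for illustration; the original fingerprint format is not described.

```python
from collections import deque


def passes_palette_gate(fingerprint, recent, max_matches=2):
    """Return False when `fingerprint` matches 3 or more of the recent ones."""
    matches = sum(1 for past in recent if past == fingerprint)
    return matches <= max_matches


# Fingerprints of the last 5 accepted images; older ones fall off automatically.
recent = deque(maxlen=5)
```

The important property is that the result is a boolean that blocks the cycle, not a score that gets logged.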

7. Accumulation Without Amnesia

Positive solutions accumulated with TTL = 8 generations. Visual memory: infinite.

Breakthrough styles: infinite. Journal: infinite. Learned directives: infinite.

With each generation, the gravitational well of the dominant style deepened.

Even if one generation accidentally escaped into a new style, it added 1 entry

against 50 entries about the “working” style.

The system snapped back.

Part III — Recommendations

1 Separate “good” from “repeat”

A high score should mean “remember this as an achievement”

— not “do this again next time”.

Museum, not factory. Collect successes for analysis but never inject them into generation prompts.

2 Human feedback only

The only signal for “make more like this” should come from the user

(favorites, ratings). Automated self-evaluation cannot judge originality —

it can only measure consistency with its own past patterns.

3 Diversity gate, not diversity score

Instead of soft “freshness=6/10” —

a hard gate: if palette or material fingerprint matches recent generations above 60%,

reject and regenerate. Don’t advise diversity — enforce it.
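One way to read “matches above 60%” is as set overlap between fingerprints. The sketch below assumes a fingerprint is a set of palette or material tags and uses Jaccard similarity; both choices, and the names `jaccard` and `generate_with_gate`, are assumptions rather than anything specified by the original system.

```python
def jaccard(a, b):
    """Jaccard similarity of two tag collections, in [0, 1]."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def generate_with_gate(generate, recent, threshold=0.6, max_retries=5):
    """Call generate() until its fingerprint is not too close to any recent one.

    `generate` is a hypothetical callable returning a fingerprint (a set of tags).
    """
    for _ in range(max_retries):
        fingerprint = generate()
        if all(jaccard(fingerprint, past) <= threshold for past in recent):
            return fingerprint
    raise RuntimeError("no sufficiently different candidate within the retry budget")
```

Raising when the retry budget runs out is a deliberate choice in this sketch: a gate that silently accepts the last candidate would turn back into a soft score.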

4 One feedback channel, not eight

If feedback is needed — one concise block in the prompt, not eight parallel injections.

Ideally one sentence: “Last time you used watercolor + warm tones.

Use something completely different.”

5 Thesis as seed, not chain

The series thesis should be abstract and rotate every 5–10 generations.

Or offer 10 possible visual interpretations and pick a random one each cycle.

A fixed thesis with strong visual defaults in the LLM becomes a palette prison.
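Both options are simple to express. The helper names below and the default rotation interval are hypothetical, and the thesis and interpretation lists would have to be supplied by whoever runs the system.

```python
import random


def pick_thesis(theses, generation, rotate_every=5):
    """Cycle through `theses`, switching every `rotate_every` generations."""
    return theses[(generation // rotate_every) % len(theses)]


def pick_interpretation(interpretations, rng=random):
    """Pick one visual interpretation of the current thesis at random."""
    return rng.choice(interpretations)
```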

6 Amnesia as a feature

Maximum 2–3 past entries in any prompt. Old entries deleted completely,

not faded. The system must forget faster than it learns —

this forces it into the new.
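A bounded queue gives this behavior almost for free. The sketch assumes memory entries are opaque values and uses a hypothetical `ForgetfulMemory` wrapper around Python's `collections.deque`.

```python
from collections import deque


class ForgetfulMemory:
    """Keeps only the newest `max_entries` items; older ones are deleted."""

    def __init__(self, max_entries=3):
        self._entries = deque(maxlen=max_entries)

    def remember(self, entry):
        # When full, the oldest entry is dropped entirely, not down-weighted.
        self._entries.append(entry)

    def for_prompt(self):
        # The only view of the past that a prompt builder is allowed to see.
        return list(self._entries)
```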

7 External randomization

Inject random constraints unrelated to history: a random color from HSL space,

a random word from a dictionary, a random era or culture. This is physically

impossible to loop — randomness has no memory.
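A sketch of such a constraint generator, using only the standard library. The word and era lists are placeholders invented for the example, and the saturation and lightness ranges are arbitrary assumptions. Python's `colorsys` module takes its arguments in HLS order.

```python
import colorsys
import random

# Placeholder vocabularies (hypothetical); a real system might load a dictionary file.
WORDS = ["lantern", "estuary", "ledger", "moth", "scaffold"]
ERAS = ["Bronze Age", "Edo period", "Bauhaus", "Byzantine", "Art Nouveau"]


def random_constraint(rng):
    """Build a constraint that depends on nothing the system has produced before."""
    hue = rng.random()
    saturation = rng.uniform(0.3, 1.0)
    lightness = rng.uniform(0.2, 0.8)
    r, g, b = colorsys.hls_to_rgb(hue, lightness, saturation)  # HLS argument order
    color = "#{:02x}{:02x}{:02x}".format(round(r * 255), round(g * 255), round(b * 255))
    return {"color": color, "word": rng.choice(WORDS), "era": rng.choice(ERAS)}
```

Passing in a `random.Random` instance keeps the function testable while leaving the source of randomness outside the feedback loops.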

Written February 2026, after analyzing 2000+ autonomous generations.

These lessons apply to any system that attempts to learn creative style through self-evaluation.

The Best of Emerge

Out of 2610 generations, these are the ones that stood out —

selected by the curator as the strongest works from the entire project.
